CDSA

M&E Journal: Cloud Control Options for Content Production

By Usman Shakeel, Worldwide Technical Leader, Media and Entertainment, Amazon Web Services

M&E customers are using the cloud for a wide range of use cases, including many aspects of content production and post, and large parts of the business-to-consumer, camera-to-screen supply chain. Managed infrastructure services and rich platform features remove the need for customers to do undifferentiated heavy lifting, so they can focus on creating and delivering differentiated customer experiences. According to Gartner: “Through 2020, public cloud Infrastructure as a Service (IaaS) workloads will suffer at least 60 percent fewer security incidents than those in traditional data centers.”

The cloud platform must be built to support a wide range of mission- and revenue-critical industry workloads (including healthcare, financial services, federal government intelligence and defense), with all customers leveraging the same infrastructure platform. Specific to M&E, the Motion Picture Association of America (MPAA) created a set of content security guidelines for storage and processing workloads on the cloud. These best practices are made up of selected requirements from a set of industry security standards based on ISO, OWASP, CSA, PCI, NIST 800-53, and others.

Alignment with MPAA guidelines requires a self-assessment or inspection without a formal audit process. In 2015, Amazon Web Services (AWS) published a detailed security mapping report that shows how alignment with the MPAA controls is achieved. Media customers can use the AWS MPAA guidance document to augment their risk assessment and evaluation of high-value content environments on AWS.

In addition to the MPAA best practices guidelines, most major studios have their own set of security requirements. Service providers are required to follow these requirements in their application environments for any specific studio project. AWS has worked with a third-party auditor to assess security controls and build a reference template of those security controls. This template is based on the security standards of major studios and covers common media workloads such as visual effects/rendering, asset management, and archiving. Studios can leverage the cloud for content production with their service providers without having to move content outside their isolated cloud environment, making the entire workflow more securely controlled and auditable.

Here’s a look at the essential cloud security controls that help studios and their affiliates achieve a secure content workflow.

Shared responsibility models (SRMs)

Moving these workflows to the cloud relies upon a model of shared responsibility between the customer (content owner or service provider) and the cloud provider. This shared model can help relieve customers’ operational burden because the cloud provider manages and controls the components from the host operating system and virtualization layer down to the physical security of the hosting facilities. The customer assumes responsibility for, and management of, the guest operating system, associated application software, and the configuration of the security controls that the cloud provider offers.

Customers can then use the cloud provider’s control and compliance documentation and relevant third-party industry templates to perform their control evaluation and verification procedures as required.

Content locality

An AWS Region is a physical location in the world where multiple Availability Zones exist. Availability Zones consist of one or more discrete data centers, each with redundant power, networking, and connectivity, housed in separate facilities.

Whenever a service is instantiated or content is stored in an AWS Region, it stays in that Region. This allows customers to bind their content and data to specific Regions for content rights or data sovereignty reasons.

Accounts structure and governance

Access and infrastructure isolation across project environments (or across customers, in the case of a service provider) is required. A cloud service provider must offer flexible control options for the accounts that manage the underlying resources. These options are designed to help provide cost allocation, agility, and security. Multiple accounts can provide the highest level of resource and security isolation.

AWS customers can use organizational units (OUs) with AWS Organizations to create hierarchical and logical account groupings. Customers can also use service control policies (SCPs) to restrict, at the account level, which services and actions the users, groups, and roles in those accounts can use. The graphic shows a Cross-Account Manager solution that automates the provisioning of Identity and Access Management (IAM) roles and policies for cross-account access. In many cases, service providers such as VFX/post-production houses can leverage this automation to create a separate account for each studio project.
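As an illustration of how an SCP can express such a restriction, here is a minimal sketch that builds a policy document denying requests outside an approved list of Regions, which also supports the content locality goal described earlier. The function name `build_region_scp`, the example Region, and the list of exempted global-service actions are assumptions made for this example and are not taken from the article; a real policy would need to be reviewed against the services a project actually uses.

```python
import json


def build_region_scp(allowed_regions,
                     exempt_actions=("iam:*", "organizations:*", "sts:*", "support:*")):
    """Build an SCP document (as a dict) that denies requests outside allowed_regions.

    exempt_actions is an illustrative list of global-service actions that are
    left out of the deny rule; adjust it for the workload at hand.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyOutsideAllowedRegions",
                "Effect": "Deny",
                # Everything except the exempted actions is subject to the deny.
                "NotAction": list(exempt_actions),
                "Resource": "*",
                "Condition": {
                    "StringNotEquals": {
                        "aws:RequestedRegion": list(allowed_regions)
                    }
                },
            }
        ],
    }


# Hypothetical usage: confine a studio project account to a single Region.
scp = build_region_scp(["us-west-2"])
print(json.dumps(scp, indent=2))
```

The resulting JSON could then be attached to an OU or account through AWS Organizations, so that every project account under it inherits the same guardrail.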

Within a single account, customers should implement best practices around key management, user accounts and identity federation.

An appropriately segregated network

To keep different project environments logically isolated, it is a best practice to use a separate Virtual Private Cloud (VPC) for each project. There are several best practices for VPC design based on the underlying customer scenarios. One example is to restrict content access based on the VPC application function, as shown in the figure below.

Additionally, controls such as individual virtual server logical firewalls, routing tables, and network access control lists can be configured separately for each environment for isolation.

Storage security

Securing storage appropriately is a key requirement in all media workflows. AWS storage services (e.g., Amazon S3, Amazon Glacier, Amazon Elastic Block Store and Amazon Elastic File System) offer several levels of security controls, such as S3 VPC endpoints or AWS Direct Connect/HTTPS endpoints for access and AWS Snowball for ingest, as well as comprehensive individual object access policies. Storage services also offer free encryption with customer-provided keys and integration with AWS Key Management Service (AWS KMS). One concern that content owners often have with cloud-based persistent storage is the deletion of content after it has been processed.

When a storage device is no longer needed, AWS leverages a decommissioning process that uses the techniques detailed in DoD 5220.22-M (“National Industrial Security Program Operating Manual”) and NIST 800-88 (“Guidelines for Media Sanitization”) to destroy data.

There are several best practices for both deleting content from block volumes after the content has been processed and for processing the content directly from Amazon S3. Additionally, services like AWS KMS provide the capability to build an end-to-end encrypted workflow with managed key distribution across different processing nodes including customer-provided keys.
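To make the S3 VPC endpoint control mentioned in this section more concrete, the following sketch builds a bucket policy that denies any request that does not arrive through a given VPC endpoint. The function name, bucket name, and endpoint ID are hypothetical placeholders for this example. Note that a policy like this also blocks console access from outside the VPC, so it should be tested carefully before being applied.

```python
import json


def build_vpce_only_bucket_policy(bucket_name, vpc_endpoint_id):
    """Build an S3 bucket policy (as a dict) that denies access unless the
    request comes through the given VPC endpoint."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyAccessOutsideVpcEndpoint",
                "Effect": "Deny",
                "Principal": "*",
                "Action": "s3:*",
                # Cover both the bucket itself and every object in it.
                "Resource": [
                    f"arn:aws:s3:::{bucket_name}",
                    f"arn:aws:s3:::{bucket_name}/*",
                ],
                "Condition": {
                    "StringNotEquals": {"aws:SourceVpce": vpc_endpoint_id}
                },
            }
        ],
    }


# Hypothetical bucket and endpoint identifiers, for illustration only.
policy = build_vpce_only_bucket_policy(
    "example-studio-content", "vpce-0123456789abcdef0"
)
print(json.dumps(policy, indent=2))
```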

Virtual instance security

In addition to the security tools provided with AWS compute services, there are several best practices that, if implemented, yield a significant gain in operational efficiency and control. Infrastructure automation allows infrastructure to be recycled after every project, so that each new environment starts with the latest OS patches and application updates. Amazon EC2 Systems Manager helps customers automatically collect software inventory, apply OS patches, create system images and configure the operating systems.

Ongoing audit and compliance

A customer should be able to use the underlying cloud provider’s security template for a specific environment to assess, audit and evaluate the configurations of their cloud environment. Continuously monitoring resource configurations allows customers to automate the evaluation of recorded configurations against desired configurations.
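The idea of evaluating recorded configurations against desired ones can be sketched in a few lines. This is a simplified, service-agnostic illustration and not how any particular AWS service implements it; the setting names (`encryption_enabled`, `public_access_blocked`, `region`) and the function name are invented for the example.

```python
def evaluate_configuration(recorded, desired):
    """Compare a recorded configuration against the desired one.

    Returns a list of (setting, expected, actual) tuples, one for each
    setting that is missing or differs from the desired value.
    """
    findings = []
    for setting, expected in desired.items():
        actual = recorded.get(setting)  # None when the setting was not recorded
        if actual != expected:
            findings.append((setting, expected, actual))
    return findings


# Hypothetical desired baseline and a recorded snapshot of one resource.
desired = {
    "encryption_enabled": True,
    "public_access_blocked": True,
    "region": "us-west-2",
}
recorded = {
    "encryption_enabled": True,
    "public_access_blocked": False,
}

findings = evaluate_configuration(recorded, desired)
for setting, expected, actual in findings:
    print(f"NON_COMPLIANT {setting}: expected {expected!r}, found {actual!r}")
```

Running a check like this each time a configuration change is recorded is what turns a one-time assessment into continuous compliance monitoring.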

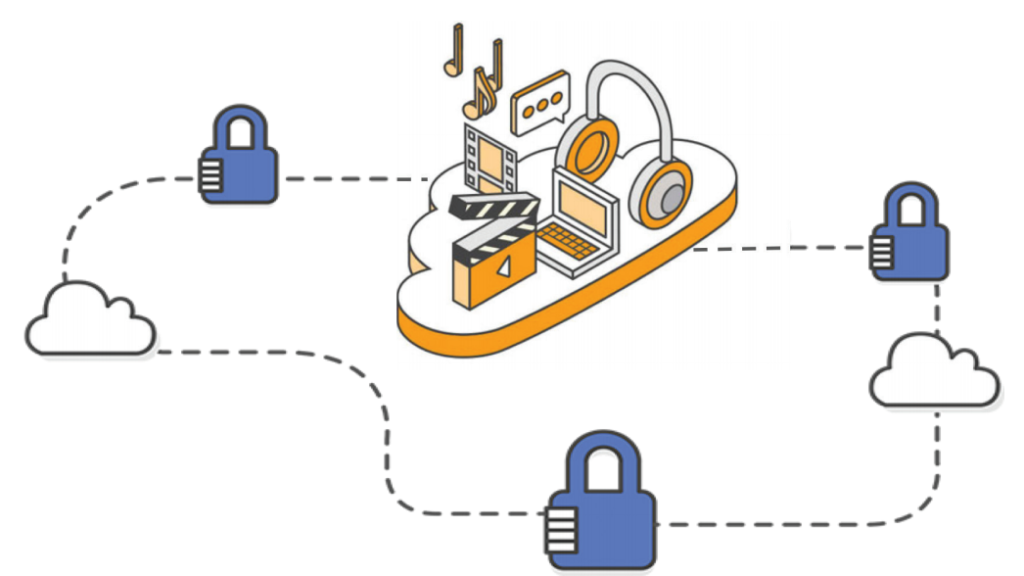

Securely leveraging third-party PaaS/SaaS on the cloud

The content owner can encrypt the content in the object store using their own AWS KMS key. They can decide on a scheme with respect to the primary keys and data keys. The content owner then creates an IAM role that gives the authenticated and authorized user read permissions on its S3 bucket. The IAM role also contains the permission to call the AWS KMS service and get a data key for a piece of encrypted content. The content owner must explicitly make the IAM role available to the SaaS/PaaS environment via cross-account access. The processing instances in the SaaS/PaaS environment are launched with an IAM instance profile that carries the above permissions. This allows for a seamless key transfer and end-to-end encryption of content within the workflow. If the content owner wants to remove the service provider’s access to the content, it can remove the IAM role.
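A rough sketch of the two policy documents behind such a role is shown below: a trust policy that lets the service provider’s account assume the role, and a permissions policy that grants read access to the bucket and the ability to decrypt data keys with the content owner’s KMS key. The account ID, bucket name, key ARN, and function names are hypothetical, and the exact set of actions needed depends on the encryption scheme chosen.

```python
import json


def build_trust_policy(provider_account_id):
    """Trust policy: allows principals in the provider's account to assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"AWS": f"arn:aws:iam::{provider_account_id}:root"},
                "Action": "sts:AssumeRole",
            }
        ],
    }


def build_permissions_policy(bucket_name, kms_key_arn):
    """Permissions policy: read objects and decrypt their data keys, nothing else."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ReadContent",
                "Effect": "Allow",
                "Action": ["s3:GetObject"],
                "Resource": f"arn:aws:s3:::{bucket_name}/*",
            },
            {
                "Sid": "DecryptDataKeys",
                "Effect": "Allow",
                "Action": ["kms:Decrypt"],
                "Resource": kms_key_arn,
            },
        ],
    }


# Hypothetical identifiers, for illustration only.
trust = build_trust_policy("111122223333")
permissions = build_permissions_policy(
    "example-studio-content",
    "arn:aws:kms:us-west-2:444455556666:key/example-key-id",
)
print(json.dumps(trust, indent=2))
print(json.dumps(permissions, indent=2))
```

Because access flows entirely through this one role, deleting it (or removing the provider from its trust policy) revokes the service provider’s access to both the content and the keys.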

VFX/rendering/batch hybrid workflow

Many VFX houses have limited on-premises infrastructure and want to leverage cloud-based infrastructure as an extension of their on-premises render farm while still meeting the studio’s security requirements. We’ve worked with a third-party auditor to create a reference template for these workloads based on studio security requirements. The VFX house can use it to create a security control set for its environment. If object storage (persistent storage) is used, the content should be encrypted on the client side before being transferred via a secure channel. Once the output is produced, it can be encrypted in the cloud and then sent back to the VFX house.

Parting thoughts

We’ve showcased some important security controls from the AWS Cloud Platform and relevant design patterns. These can enable content owners and their service providers to build secure content production environments. Beyond the best practices discussed here, the common theme is that moving content less, or not at all, enables a more secure content production workflow.

Leveraging the cloud with proper security controls lets content owners easily keep their content and its processing environments visible to them and within a secure environment. Various parties can then provide their services on the content in the content owner’s environment with full audit, end-to-end encryption, logging and monitoring.

Click here to download the entire Winter 2018 M&E Journal