M&E Journal: Managing Five States of Data for the M&E Market

By Jeff Greenwald, HGST

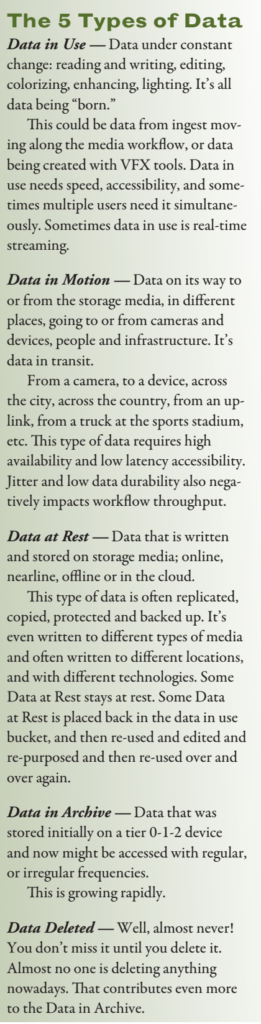

All media companies, whether a post house, a game developer, an advertiser, a CDN, a movie studio or a broadcaster, wrestle with data in five states: Data in Use, Data in Motion, Data at Rest, Data in Archive and Data Deleted. The challenges in managing this wide variety of data are straightforward:

1) How do you lower the cost of that data? 2) How can you move data through the media workflow faster? 3) How do you archive and protect your assets, the right assets, without over-storing and without deleting data you’ll regret losing later?

The Montreux Jazz Festival (MJF) in Switzerland, started 50 years ago in 1967 by a visionary named Claude Nobs, had great insight into its future data needs. With rare exceptions, the MJF organization video-recorded all of its jazz, blues and rock concerts.

It recorded Ella Fitzgerald in 1971, David Bowie in 2002, and in between, Nina Simone, Etta James, Marvin Gaye, B.B. King, and hundreds of other performers. MJF realized how important these video recordings were going to be, and understood that technology would ultimately allow the assets to be preserved and made available to subsequent generations for research and review, as well as enable potential distribution, collaboration and even monetization (once digital rights management issues had been clarified).

The MJF was one of the first organizations in the early 1990s to record in HD.

Today, in a partnership between the Claude Nobs Foundation and the Metamedia Center of EPFL (the Swiss Federal Institute of Technology in Lausanne), the MJF is digitizing everything, and in parallel is exploring new technologies such as 3D audio and 360° video capture, along with many other innovations, including an object storage system for the 5,000 hours of archived, uncompressed concert recordings.

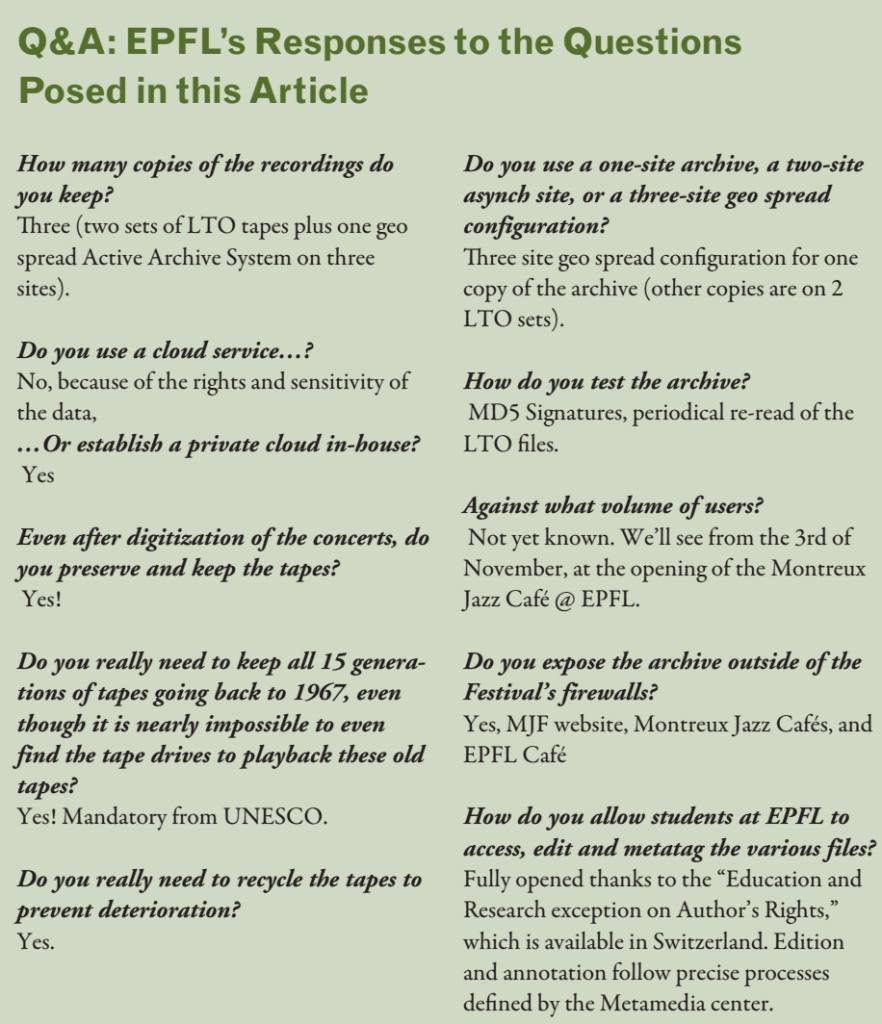

The collection is now registered at UNESCO, as part of the “Memory of the World” Program. The Metamedia Center had to answer the same kinds of questions routinely asked by many M&E companies:

- How many copies of the recordings should they keep?

- Should they use a cloud service, or establish a private cloud in-house?

- Even after digitization of the concerts, should they preserve and keep the tapes?

- Do they really need to keep all 15 generations of tape going back to 1967, even though it is nearly impossible to find the tape drives needed to play back these old tapes?

- Do they really need to recycle the tapes to prevent deterioration?

- Do they use a one-site archive, a two-site asynchronous configuration, or a three-site geographically spread (geo-spread) configuration?

- How do they test the archive, and against what volume of users?

- Do they expose the archive outside of the Festival’s firewalls? How do they allow students at EPFL to access, edit and metatag the various files?

This deployment has taken nearly seven years from its infancy to finally going into production.

Today, they are nearly through this huge undertaking.

One thing, though, is clear: they needed to understand the five states of the data that has been generated every year for the last 50 years.

They had to create workflows that fit their budgets, hit their performance targets, and met the organization’s SLAs within the technology constraints at hand.

In the end, achieving this goal was music to their ears and to their eyes, and to ours as well.

From the Spring 2016 M&E Journal.